Facebook fact-checks photos and videos in a bid to curb fake news

By Satya Priya BN

Hyderabad: Facebook is a popular social networking site that lets people around the globe connect with one another. It also offers a platform for third-party developers and helps people find the news that is most important and meaningful to them. However, the biggest threat to the site is the spread of misinformation on the platform.

While some people spread false news unintentionally, others spread it deliberately to drive clicks and yield profits. It is also used to harm someone personally or politically.

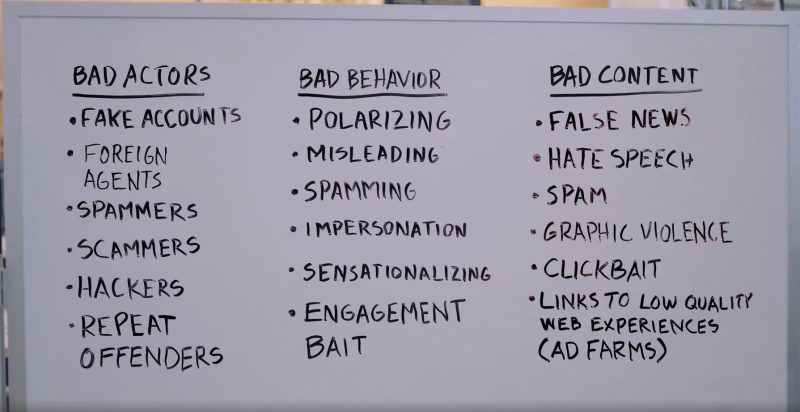

Here are various ways in which misleading content spreads:

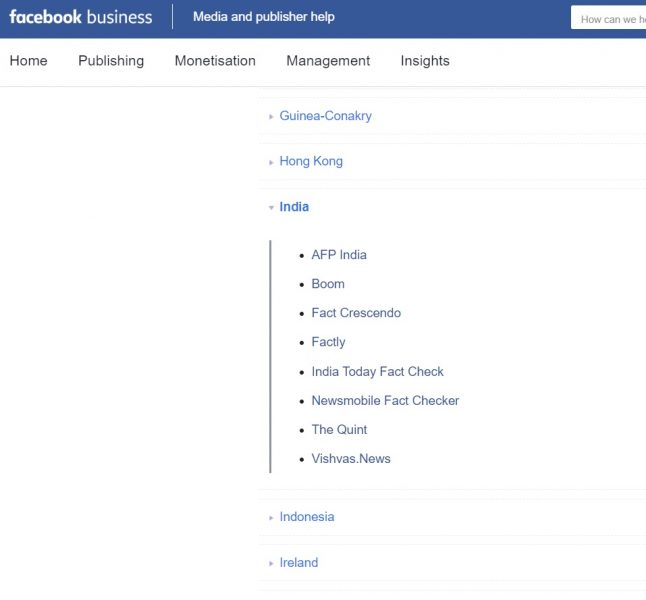

Along with countries like America, France, Italy, the Netherlands, Germany, Mexico, Indonesia, and the Philippines, the social media giant is adopting strict measures to fight misinformation in India.

Facebook first implemented its third-party fact-checking process in 2016, but thus far that initiative has focused only on articles with misleading content. Now, to limit the spread of false news, Facebook is working extensively with AI and machine learning, as well as third-party fact-checkers. The social network is now expanding fact-checking to photos and videos shared on the platform.

The company has developed a machine-learning model that uses various engagement signals, including feedback from people, to identify potentially malicious content. These photos and videos are then sent to partner fact-checkers for review. The expert third-party fact-checkers evaluate the pictures and videos using visual verification techniques such as reverse image search and image metadata analysis. They also analyse the date and time of the content.

Fact-checkers assess the credibility of a shared image or video by combining these techniques with other journalistic practices. The latter include expert research, academic extracts or information from government agencies.

Here is the list of the third-party fact-checking websites in India:

Facebook is also adopting other technologies, such as Optical Character Recognition (OCR), to recognise false news better. OCR is used to extract text from images, which is then compared with headlines and text from fact-checkers’ articles.
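The comparison step can be pictured with a small sketch. It assumes the OCR stage has already produced a plain string and uses Python's standard `difflib` to score that string against a list of fact-checked headlines. The function names, the sample headlines and the 0.8 threshold are illustrative assumptions, not details of Facebook's system.

```python
from difflib import SequenceMatcher


def normalise(text: str) -> str:
    """Lower-case the text and collapse runs of whitespace."""
    return " ".join(text.lower().split())


def best_match(extracted_text, headlines, threshold=0.8):
    """Return (headline, score) for the closest fact-checked headline.

    extracted_text: text assumed to have been pulled from an image by OCR.
    headlines: headlines from fact-checkers' articles.
    threshold: hypothetical cut-off below which no match is reported.
    """
    cleaned = normalise(extracted_text)
    best_headline, best_score = None, 0.0
    for headline in headlines:
        # ratio() returns a similarity between 0.0 and 1.0.
        score = SequenceMatcher(None, cleaned, normalise(headline)).ratio()
        if score > best_score:
            best_headline, best_score = headline, score
    if best_score >= threshold:
        return best_headline, best_score
    return None, best_score


# Made-up headlines standing in for fact-checkers' articles.
checked = ["Miracle cure found in village well", "Cricket team wins final"]
print(best_match("  Miracle CURE found in   village well ", checked))
print(best_match("Local weather forecast for Monday", checked))
```

A production system would need matching that tolerates OCR errors and paraphrased text far better than a character-level ratio does; the sketch only shows the shape of the comparison.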

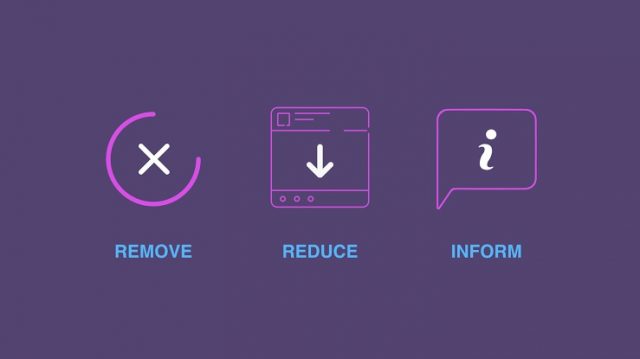

Facebook’s strategy to stop misinformation has been to ‘Remove, Reduce, and Inform’.

- Remove the accounts and content that violate community standards or ad policies.

- Reduce the distribution of false news and inauthentic content.

- Inform people by giving them more context on the posts they see.

This approach disrupts those who frequently spread false news. For example, a Facebook page about an Indian celebrity that is operated from America violates the company’s requirements. The page will therefore be taken down, deleting all of its posts.

It also decreases the reach of these false stories. Machine learning is helping the Facebook team detect spam. Circulating spam earns money for those behind it, so the social network intends to reduce this circulation and render spam unprofitable.

When fact-checking organisations rate news as false, the story gets low priority on the news feed, and the views are cut down by 80%. A page or a person who repeatedly spreads such news will have their advertising rights removed, distribution of their feed reduced and the opportunities to monetise restricted.
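To put the 80% figure in concrete terms, here is a minimal arithmetic sketch. The function name and the sample view count are hypothetical; only the 80% reduction comes from the figure quoted above.

```python
def views_after_demotion(expected_views: int, reduction_percent: int = 80) -> int:
    """Estimate the views left after a story is rated false and demoted.

    expected_views: views the story would otherwise have received (made-up input).
    reduction_percent: share of views removed; 80 is the figure quoted above.
    """
    if not 0 <= reduction_percent <= 100:
        raise ValueError("reduction_percent must be between 0 and 100")
    # Integer arithmetic avoids floating-point rounding surprises.
    return expected_views * (100 - reduction_percent) // 100


# A story that would have reached 10,000 views keeps about 2,000.
print(views_after_demotion(10_000))
```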

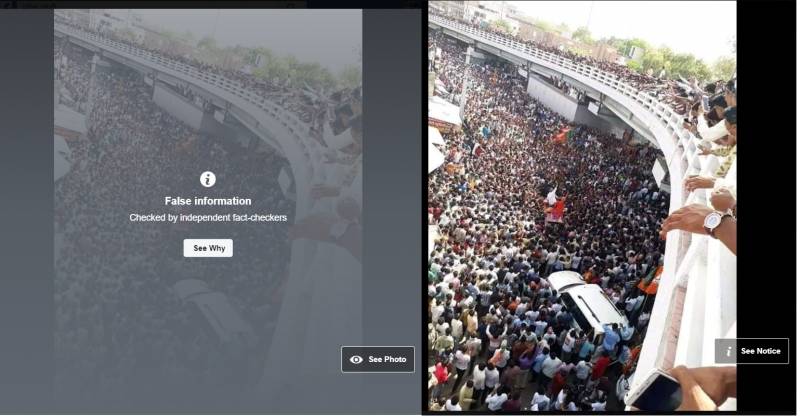

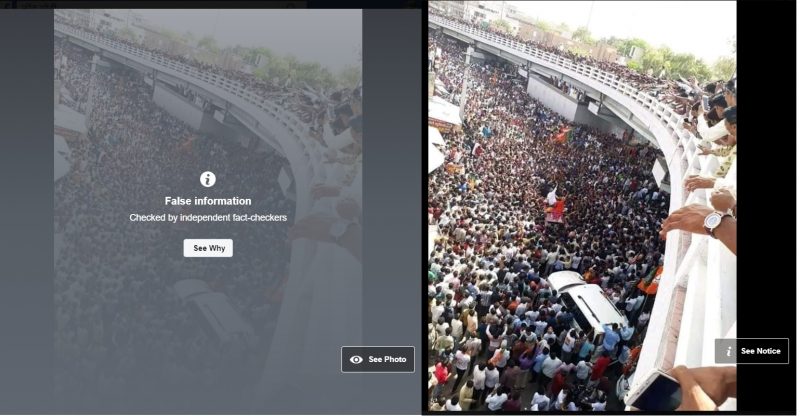

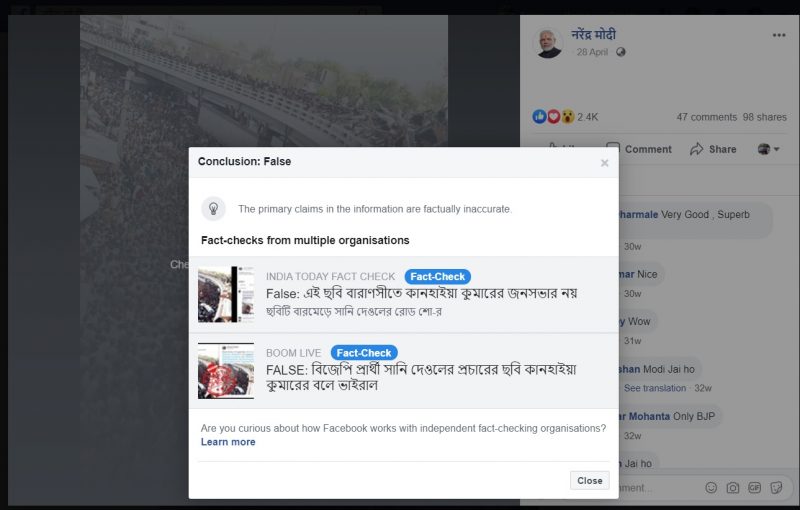

Over the past few days, Facebook has tagged a few stories as false information, with a note saying ‘checked by independent fact-checkers’ and a button that says ‘See why’. Clicking the button shows a link to the article published by the fact-checking organisation. This process started very recently, and even old misleading posts that attracted a lot of activity are being reviewed and tagged as false information.

Facebook has also recently published a few tips to spot false news and a detailed report on how the company is addressing fake news. The report states the measures taken by Facebook to stop the spread of false news on its platform and the measures users can take to stop it.

Facebook also assures users that it is committed to fighting false news over the long term, using technology and partnerships to stay ahead of spammers.

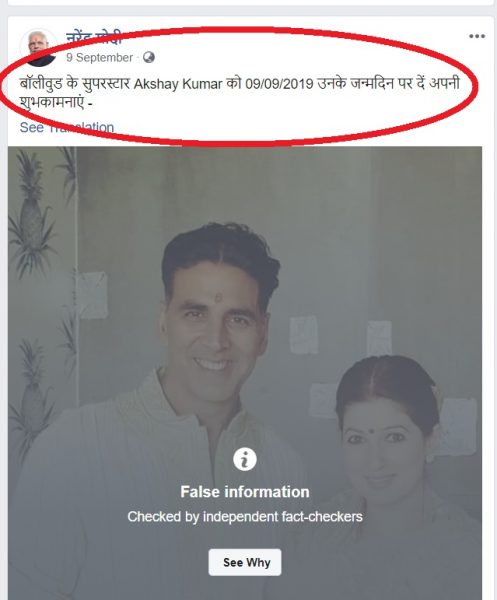

But there seems to be a bug in the AI and ML tool: if an image used in fact-checked false news is re-used in a post about any other news, that post is also tagged as false information, even though the accompanying text is different.
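The behaviour described above is what one would expect if matching were done on the image alone. The sketch below illustrates the difference between image-only matching and matching on image plus text. It is purely hypothetical: how Facebook's tool actually matches content is not public, the SHA-256 digest merely stands in for whatever image fingerprint is used, and all names and sample data are invented.

```python
import hashlib


def fingerprint(image_bytes: bytes) -> str:
    """Stand-in image fingerprint: a SHA-256 digest of the raw bytes."""
    return hashlib.sha256(image_bytes).hexdigest()


def normalise(text: str) -> str:
    """Lower-case the text and collapse runs of whitespace."""
    return " ".join(text.lower().split())


def flag_by_image_only(post: dict, debunked: list) -> bool:
    """Flag a post if its image matches any debunked post's image."""
    known = {fingerprint(item["image"]) for item in debunked}
    return fingerprint(post["image"]) in known


def flag_by_image_and_text(post: dict, debunked: list) -> bool:
    """Flag a post only if both image and accompanying text match."""
    for item in debunked:
        same_image = fingerprint(post["image"]) == fingerprint(item["image"])
        same_text = normalise(post["text"]) == normalise(item["text"])
        if same_image and same_text:
            return True
    return False


# Made-up data: the same picture re-used with an unrelated caption.
debunked_posts = [{"image": b"same-picture", "text": "Claim A"}]
new_post = {"image": b"same-picture", "text": "Unrelated claim B"}

print(flag_by_image_only(new_post, debunked_posts))      # image alone matches
print(flag_by_image_and_text(new_post, debunked_posts))  # text differs
```

Requiring the text to match as well avoids the false tag in this toy case, at the cost of missing posts that pair the same image with a reworded version of the false claim.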

Although its attempt is quite extensive, it looks like Facebook will have to foolproof its platform on several fronts. We hope it succeeds.